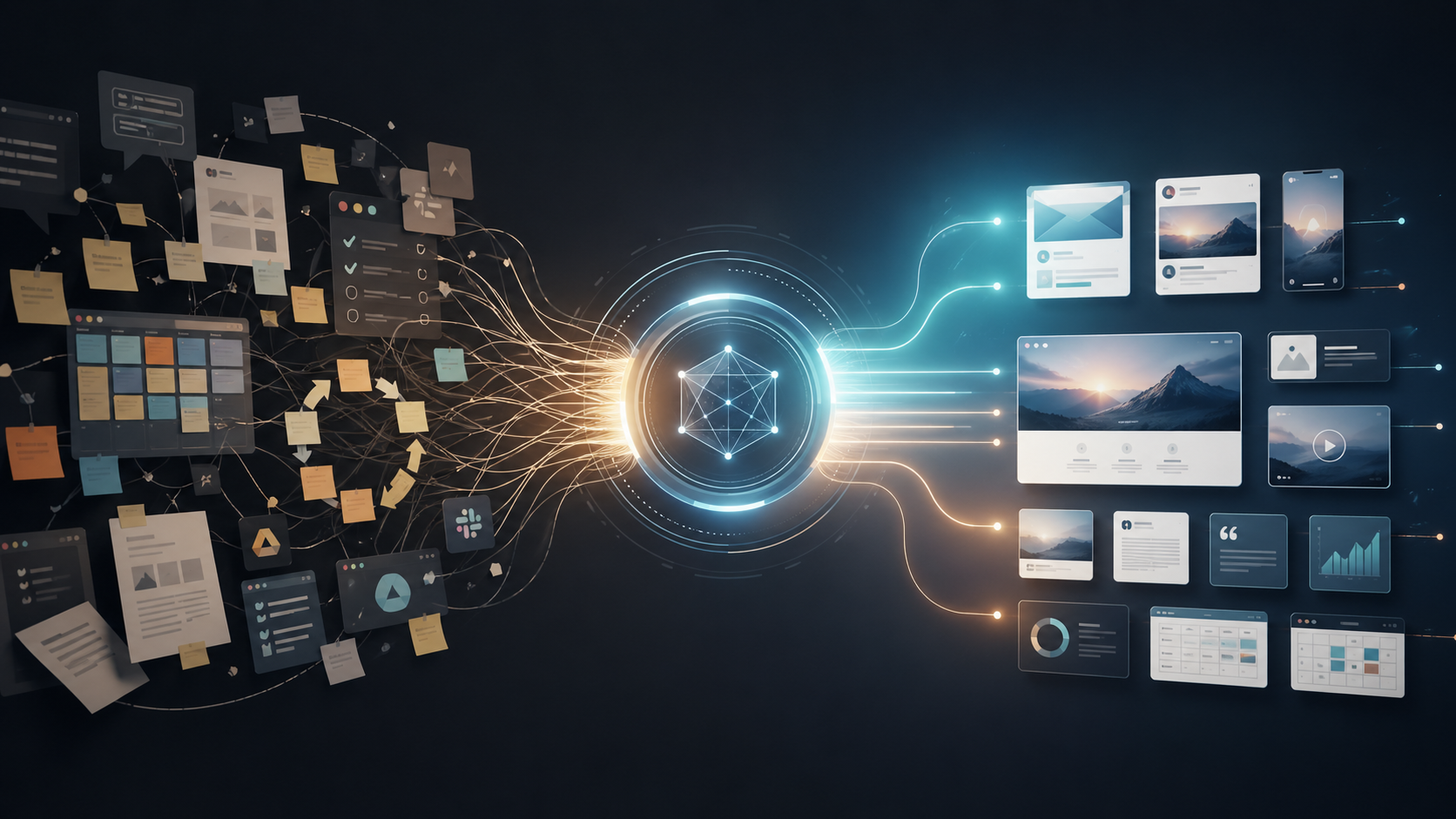

How Semantic OS built SEO Pipeline by connecting Search Console data, site content, optimization history, and search context into a system that remembers, reasons, and surfaces what to do next.

Google Search Console gives you the evidence. Your site content gives you the structure. Your optimization history gives you the memory. SEO Pipeline connects them into a living intelligence layer.

That matters because search is still enormous, but it is no longer simple. According to Statcounter, Google held 89.85% of worldwide search engine market share in March 2026. In parallel, Alphabet reported that Google Search & other revenue grew 17% year over year in Q4 2025. At the same time, Google said AI Overviews had scaled to 1.5 billion monthly users across 200 countries and territories, and Google Lens was being used by more than 1.5 billion people every month. Search is still the dominant discovery layer, but the way demand expresses itself inside that layer is becoming more AI-mediated, more multimodal, and more context-dependent.

Search Console gives evidence, not intelligence

Search Console is indispensable because it captures the core evidence stream: clicks, impressions, CTR, average position, query rows, and page rows. But Google’s own documentation frames it as a performance reporting system, not a reasoning system. It tells you what users saw and clicked. It does not, by itself, remember what changed on the site, explain why a cluster moved, or separate a content problem from a market shift. Google’s late-2025 launch of custom annotations is a strong signal that this gap is real: even inside Search Console, teams needed a better way to connect chart movement to specific changes and external events.

The reporting model also has practical limits that become painful at scale. Google says some queries are hidden for privacy, tables show only the most important rows, collected data is usually visible after a 2–3 day lag, and the Search Console API is limited to 50,000 rows per day per search type per property. Bulk export into BigQuery gives a more complete dataset than the table view, but even that excludes anonymized queries. In other words, Search Console is excellent evidence, but it is still partial evidence, filtered through interface and pipeline constraints.
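To make those limits concrete, here is a minimal Python sketch of how an ingestion job might plan around them. It is an illustration under stated assumptions, not SEO Pipeline's actual code: the helper names are hypothetical, the three-day lag is a conservative reading of the 2–3 day figure, and the 25,000-row page size and the request field names reflect our understanding of the Search Analytics query request, so check them against the current API reference before relying on them.

```python
from datetime import date, timedelta


def latest_stable_date(today: date, lag_days: int = 3) -> date:
    """Most recent day we treat as settled, given the reporting lag (assumed 3 days)."""
    return today - timedelta(days=lag_days)


def build_query_bodies(day: date, search_type: str = "web",
                       page_size: int = 25_000, daily_cap: int = 50_000) -> list:
    """Plan paginated request bodies for one day, stopping at the daily row cap.

    page_size and the field names are assumptions to verify against the API docs.
    """
    bodies = []
    for start_row in range(0, daily_cap, page_size):
        bodies.append({
            "startDate": day.isoformat(),
            "endDate": day.isoformat(),
            "dimensions": ["query", "page"],
            "type": search_type,
            "rowLimit": page_size,
            "startRow": start_row,
        })
    return bodies


day = latest_stable_date(date(2026, 3, 10))
bodies = build_query_bodies(day)
```

The sketch only plans requests and sends nothing. Anything beyond the cap, plus every anonymized query, stays invisible no matter how the calls are paginated, which is why the evidence is partial.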

A query is not just a keyword. It is a signal of demand.

Why SEO data becomes hard to interpret at scale

Google explicitly says the queries shown in the Performance report are exact matches, and it advises using regex when similar searches fragment across many rows. That fragmentation is one reason Google introduced Query groups in Search Console Insights in October 2025. In Google’s own explanation, a single user question can appear through many variants, including misspellings, alternate phrasing, and different languages, and the new feature groups similar queries with AI because the old row-by-row view makes it tedious to identify the real underlying interest. That is the core scaling problem SEO Pipeline is designed to solve.
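The regex approach Google recommends can be sketched in a few lines of Python. The rows, patterns, and group names below are invented for illustration. Search Console's own regex filter uses RE2 syntax, which differs from Python's `re` module in some details, so treat this as an offline approximation rather than a copy of the in-product filter.

```python
import re
from collections import defaultdict

# Illustrative rows only; real rows would come from the Performance report or bulk export.
ROWS = [
    {"query": "seo pipeline pricing", "clicks": 12, "impressions": 300},
    {"query": "seo pipeline price", "clicks": 3, "impressions": 120},
    {"query": "how much does seo pipeline cost", "clicks": 5, "impressions": 80},
    {"query": "seo pipline pricng", "clicks": 1, "impressions": 40},
    {"query": "what is an intelligence layer", "clicks": 7, "impressions": 500},
    {"query": "semantic os", "clicks": 20, "impressions": 100},
]

# Hypothetical groups: one pattern per underlying interest, misspelling included.
GROUPS = {
    "pricing": re.compile(r"\b(pric(e|ing|ng)|cost)\b"),
    "definition": re.compile(r"^what is\b"),
}


def group_rows(rows, groups):
    """Roll exact-match query rows up into the first regex group that matches."""
    totals = defaultdict(lambda: {"clicks": 0, "impressions": 0, "queries": []})
    for row in rows:
        label = next(
            (name for name, pattern in groups.items() if pattern.search(row["query"])),
            "ungrouped",
        )
        bucket = totals[label]
        bucket["clicks"] += row["clicks"]
        bucket["impressions"] += row["impressions"]
        bucket["queries"].append(row["query"])
    for bucket in totals.values():
        # CTR is recomputed from the summed totals, not averaged across rows.
        bucket["ctr"] = bucket["clicks"] / bucket["impressions"] if bucket["impressions"] else 0.0
    return dict(totals)


grouped = group_rows(ROWS, GROUPS)
```

Four fragmented rows collapse into one pricing interest. The weakness is just as visible: every pattern has to be written and maintained by hand, which is the tedium that motivates semantic grouping.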

The complexity is not just volume. It is also the shape of search behavior itself. Google’s documentation now notes that a follow-up question in AI Mode counts as a new query in Search Console. Google also said in 2025 that AI Overviews, at 1.5 billion monthly users, drove over 10% more usage for the types of queries where they appear in major markets such as the U.S. and India, and that more than 1.5 billion people use Lens every month to search what they see. A query is no longer only a typed keyword string. It can be iterative, visual, conversational, and session-based.

This is why modern SEO analysis breaks down when it stays trapped inside a dashboard mentality. Search volume is still concentrated enough that organic search remains mission-critical, but the demand landscape is too semantically messy and interface-rich to be managed well through isolated spreadsheets, filters, and screenshots. The operating problem is not that search disappeared. It is that demand now moves faster, branches more widely, and carries more context than the default reporting model can comfortably hold.

How we modeled the query universe

SEO Pipeline starts by treating every Search Console row as atomic evidence, not as the final unit of analysis. Exact-match queries become inputs into a larger “query universe” model that groups demand by intent, topic, modifier, stage, and adjacency. That is directionally consistent with Google’s own move toward Query groups, but the goal here is broader: not just a cleaner report, but a persistent internal map of how demand is structured around the business.

To make that possible, the system needs semantic memory, not just string matching. OpenAI documents that embeddings measure the relatedness of text strings and are commonly used for search, clustering, recommendations, and classification. Its Retrieval documentation further explains that semantic search can surface relevant results even when items share few or no keywords. Google’s Gemini embeddings documentation makes the same broader point from another angle: embeddings can support semantic search, clustering, and classification, and the latest Gemini embedding model maps text, images, video, audio, and documents into a unified embedding space across more than 100 languages. That is the technical basis for turning disconnected keyword rows into a meaning-aware demand model.
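As a rough sketch of what grouping by meaning looks like mechanically, the Python below clusters queries by cosine similarity between their vectors. The three-dimensional vectors are hand-written stand-ins, not output from any real embedding model, and the greedy single-pass clustering and the 0.8 threshold are simplifying assumptions chosen for readability. A production system would fetch vectors from an embeddings API and likely use a more robust clustering method.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def cluster_queries(vectors, threshold=0.8):
    """Greedy clustering: join the first cluster whose seed vector is similar enough."""
    clusters = []  # each entry is (seed_vector, [member queries])
    for query, vec in vectors.items():
        for seed, members in clusters:
            if cosine(seed, vec) >= threshold:
                members.append(query)
                break
        else:
            # No existing cluster was close enough, so this query seeds a new one.
            clusters.append((vec, [query]))
    return [members for _, members in clusters]


# Toy vectors (made up). Note the first two queries share no keywords at all.
TOY_VECTORS = {
    "seo reporting tool": [0.9, 0.1, 0.0],
    "search console dashboard alternative": [0.8, 0.2, 0.1],
    "what is an intelligence layer": [0.1, 0.9, 0.1],
    "custom intelligence layer definition": [0.0, 0.95, 0.2],
}

clusters = cluster_queries(TOY_VECTORS)
```

The first two queries land in the same cluster despite having no words in common, which string matching could never do. That is the property the query universe model depends on.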

Once you treat queries that way, you can stop asking only “what keyword went up?” and start asking better questions: Which demand cluster is emerging? Which intent family is fragmenting? Which modifiers are attached to commercial, educational, or navigational needs? Which themes are rising without a strong content destination? Those are intelligence questions, not dashboard questions.

How we connected search demand to site content

The second half of the system is the content side. Search Console’s page dimension aggregates performance to final and canonical URLs, which is useful, but a canonical URL is still only a reporting surface. SEO Pipeline connects those URLs to the underlying content corpus so that pages are modeled not just as destinations but as semantic assets: pages, sections, entities, themes, templates, and internal relationships. Bulk export makes this possible operationally because Google provides a daily export path into BigQuery, where search evidence can be joined to a site’s content and metadata outside the Search Console interface.
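In its simplest form, that join is a lookup from canonical URL to content metadata. The sketch below is in plain Python for readability. In practice the same join would more likely run as SQL in BigQuery against the exported tables. The inventory fields (`template`, `theme`) and all values are hypothetical.

```python
def join_pages_to_content(page_rows, inventory):
    """Attach content metadata to page-level search rows, keyed by canonical URL.

    Returns (joined_rows, unmatched_urls). Unmatched URLs are worth reviewing:
    they earn impressions but are missing from the content model.
    """
    joined, unmatched = [], []
    for row in page_rows:
        meta = inventory.get(row["url"])
        if meta is None:
            unmatched.append(row["url"])
        else:
            joined.append({**row, **meta})
    return joined, unmatched


# Illustrative data only.
PAGE_ROWS = [
    {"url": "https://example.com/pricing", "clicks": 40, "impressions": 900},
    {"url": "https://example.com/blog/old-post", "clicks": 2, "impressions": 300},
]
INVENTORY = {
    "https://example.com/pricing": {"template": "landing", "theme": "pricing"},
}

joined, unmatched = join_pages_to_content(PAGE_ROWS, INVENTORY)
```

Once a row carries its template and theme, page performance can be aggregated by semantic role instead of by URL.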

That shifts the unit of work from URL accounting to semantic coverage. Instead of asking whether one URL ranked for one phrase, you can ask whether the site has an adequate semantic home for a cluster of demand, whether multiple pages are splitting the same intent, whether a page is attracting impressions for a topic it does not fully answer, and whether a content asset deserves expansion, consolidation, or repositioning. This is the difference between ranking inspection and search-intent mapping.
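One of those questions, whether multiple pages are splitting the same intent, reduces to a simple share calculation once queries carry a cluster label. The following Python is a minimal sketch. The 20% share threshold is an arbitrary assumption, and the cluster labels are presumed to come from an upstream grouping step.

```python
from collections import defaultdict


def find_split_clusters(rows, min_share=0.2):
    """Flag clusters where two or more pages each hold a meaningful impression share."""
    by_cluster = defaultdict(lambda: defaultdict(int))
    for row in rows:
        by_cluster[row["cluster"]][row["page"]] += row["impressions"]

    flagged = {}
    for cluster, pages in by_cluster.items():
        total = sum(pages.values())
        if total == 0:
            continue
        significant = sorted(p for p, imp in pages.items() if imp / total >= min_share)
        if len(significant) >= 2:
            flagged[cluster] = significant
    return flagged


# Illustrative rows: (intent cluster, landing page, impressions).
CLUSTER_ROWS = [
    {"cluster": "pricing", "page": "/pricing", "impressions": 600},
    {"cluster": "pricing", "page": "/plans", "impressions": 350},
    {"cluster": "pricing", "page": "/blog/launch", "impressions": 50},
    {"cluster": "definition", "page": "/what-is", "impressions": 900},
    {"cluster": "definition", "page": "/blog/launch", "impressions": 100},
]

split = find_split_clusters(CLUSTER_ROWS)
```

A flag is a prompt for review, not a verdict. Two pages can legitimately serve different stages of the same intent, so the decision to consolidate or reposition still needs the content context.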

A page is not just a URL. It is part of a semantic structure.

Why optimization history and external context matter

Even a strong semantic model is incomplete if it has no memory. Google’s custom annotations launch in November 2025 was explicit about the problem: teams need to remember when they updated a template, hired an agency, changed content toward a new intent, migrated infrastructure, or reacted to an external event. Search Console added annotations because it is often difficult to remember exactly when a specific change happened and connect that date to what appears in the chart. SEO Pipeline takes that same idea further by treating every optimization, content revision, deployment, and strategic shift as part of the system’s memory, not as a note in someone’s head or a task in a disconnected project tracker.

This is operationally realistic because Search Console bulk export is already designed as an ongoing data pipeline. Google says exports run daily, the first export arrives within 48 hours of successful setup, and data can continue accumulating in the destination project unless retention rules are set. That means the raw ingredients for historical memory already exist. What changes in SEO Pipeline is that this history is attached to the semantic model: what changed, on which asset, against which intent cluster, at what moment, and with what downstream search effect.
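A change record attached to that history can be as small as a date, an asset, a cluster, and an action. The Python sketch below compares average daily clicks before and after such a record. The record fields, the 14-day window, and the click numbers are all illustrative assumptions. A naive before/after average like this does not control for seasonality or external updates.

```python
from datetime import date, timedelta
from statistics import mean


def window_values(daily, start, days):
    """Values for `days` consecutive dates beginning at `start`, skipping missing days."""
    dates = (start + timedelta(days=i) for i in range(days))
    return [daily[d] for d in dates if d in daily]


def effect_of_change(change, daily_clicks, window_days=14):
    """Compare mean daily clicks before and after a logged change."""
    changed_on = change["date"]
    before = window_values(daily_clicks, changed_on - timedelta(days=window_days), window_days)
    after = window_values(daily_clicks, changed_on, window_days)
    if not before or not after:
        return None  # not enough history on one side of the change
    mean_before, mean_after = mean(before), mean(after)
    return {
        "asset": change["asset"],
        "cluster": change["cluster"],
        "before": mean_before,
        "after": mean_after,
        "relative_change": (mean_after - mean_before) / mean_before if mean_before else None,
    }


# Hypothetical change record and synthetic daily clicks for the affected cluster.
CHANGE = {"date": date(2026, 1, 15), "asset": "/pricing", "cluster": "pricing",
          "action": "rewrote title and intro"}
DAILY = {CHANGE["date"] + timedelta(days=i): (100 if i < 0 else 120) for i in range(-14, 14)}

effect = effect_of_change(CHANGE, DAILY)
```

The arithmetic matters less than the join key. Because the record names both an asset and an intent cluster, the effect can be measured where the change was aimed, not only at the site level.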

External context belongs in that same layer because Search outcomes are not determined by on-page actions alone. Google’s Search Status Dashboard shows a dense cadence of ranking changes from March 2025 through March 2026: March 2025 core, June 2025 core, August 2025 spam, December 2025 core, February 2026 Discover, March 2026 spam, and March 2026 core updates. Google’s February 2026 Discover update was initially described as targeting English-language users in the U.S., with expansion to other countries and languages planned in the future. A serious search system has to remember these environmental shifts alongside site changes, or it will constantly confuse external turbulence for internal failure.
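Remembering those environmental shifts can start as a simple interval-overlap check. In the Python sketch below, the event names and dates are placeholders invented for illustration, not actual rollout windows. Real windows would be taken from the Search Status Dashboard.

```python
from datetime import date


def overlapping_events(window_start, window_end, events):
    """Names of external events whose date range overlaps the analysis window."""
    return [
        event["name"]
        for event in events
        if event["start"] <= window_end and event["end"] >= window_start
    ]


# Placeholder events with made-up dates (NOT real rollout windows).
EXTERNAL_EVENTS = [
    {"name": "example core update", "start": date(2026, 1, 10), "end": date(2026, 1, 24)},
    {"name": "example spam update", "start": date(2026, 2, 20), "end": date(2026, 2, 27)},
]

# A traffic drop observed during this window coincides with the first placeholder event.
suspects = overlapping_events(date(2026, 1, 20), date(2026, 2, 3), EXTERNAL_EVENTS)
```

An overlap does not prove causation. It does stop an analysis from blaming a page edit for movement that coincided with a platform-wide rollout.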

The broader discovery ecosystem is also changing fast enough that first-party search evidence alone is no longer sufficient context. Adobe reported that traffic from generative AI sources to U.S. retail websites in February 2025 was up 1,200% versus July 2024, while travel, leisure, and hospitality traffic was up 1,700%. By May 2025, Adobe said AI referrals to retail had doubled from February, with bounce rates 27% lower than non-AI traffic, time per visit 38% longer, and page views per visit 10% higher. During the 2025 holiday season, Adobe reported retail AI-driven traffic up 693% year over year and AI referrals converting 31% better than other traffic sources. When discovery behavior changes that quickly, SEO systems need market context, not just keyword charts.

An SEO action is not just a task. It is part of the system’s memory.

What SEO Pipeline surfaces and why this is a Search Intelligence Layer

When those layers are connected (Search Console evidence, site content, semantic similarity, optimization history, and external search context), the system can surface what a dashboard usually cannot: rising intent clusters with weak content coverage, high-impression themes that lack convincing CTR, pages competing for the same semantic demand, clusters whose movement aligns with a known site change, and traffic shifts that are more plausibly explained by a platform update than by a single-page regression. The output is not just more reporting. It is operational intelligence about what to do next and why.
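Two of those signals can be expressed as plain rules over cluster-level data, as in the Python sketch below. The thresholds, field names, and sample clusters are hypothetical, and fixed rules like these are a deliberate simplification for illustration, not a description of how SEO Pipeline ranks its findings.

```python
def surface_opportunities(clusters, min_impressions=1000, low_ctr=0.01):
    """Return (cluster name, reason) pairs for clusters that deserve attention."""
    findings = []
    for cluster in clusters:
        impressions = cluster["impressions"]
        if impressions < min_impressions:
            continue  # too little demand to act on yet
        ctr = cluster["clicks"] / impressions
        if not cluster["pages"]:
            findings.append((cluster["name"], "demand without a content destination"))
        elif ctr < low_ctr:
            findings.append((cluster["name"], "high impressions, weak CTR"))
    return findings


# Illustrative cluster summaries; `pages` lists the assets mapped to each cluster.
CLUSTERS = [
    {"name": "pricing", "impressions": 5000, "clicks": 20, "pages": ["/pricing"]},
    {"name": "integrations", "impressions": 2000, "clicks": 0, "pages": []},
    {"name": "brand", "impressions": 3000, "clicks": 600, "pages": ["/"]},
    {"name": "niche", "impressions": 100, "clicks": 0, "pages": []},
]

findings = surface_opportunities(CLUSTERS)
```

Each finding carries a reason alongside the cluster name. A finding states what to do next and why, which a bare metric does not.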

That is why SEO Pipeline is not just an SEO dashboard. It is a productized Search Intelligence Layer. Google itself is gradually adding more context inside Search Console through features like Query groups and custom annotations, because the market clearly needs help moving from raw evidence to interpretable action. SEO Pipeline extends that logic across the whole operating system: it remembers, reasons, and connects evidence to structure and memory so decisions can be made in context, not in isolation. That is also what this proves about Semantic OS more broadly: an existing business data source can be transformed into a living intelligence system when data, memory, and reasoning are designed as one layer instead of separate tools.

In that sense, this article is a concrete companion to “SEO Pipeline,” “What Is a Custom Intelligence Layer?,” “The Intelligence Layer Stack,” and “How Campaign Pipeline Turns Strategy Into Campaign Intelligence.” The architecture is the point. The proof is that the same method can turn search data into search intelligence, and it can do the same for other business functions as well.

This is why SEO Pipeline is a concrete example of how a business function can become a custom intelligence layer.

Reference links

Selected sources for the statistics and platform facts cited above (the URLs are listed under Sources):

- Google I/O 2025 announcement on AI Overviews scale and usage lift.

- Google AI Mode update on Lens usage and multimodal search behavior.

- Statcounter global search engine market share for March 2026.

- Alphabet Q4 2025 earnings release on Google Search & other growth.

- Search Console documentation on Performance report metrics and result variability.

- Search Console documentation on anonymized queries, data truncation, and bulk export completeness.

- Search Console API documentation on the 50,000-row daily export limit.

- Search Console documentation on reporting lag.

- Google Search Central announcement on Query groups in Search Console Insights.

- Google Search Central announcement on custom annotations.

- Google Search Status Dashboard ranking history and February 2026 Discover update.

- Adobe Analytics reports on AI-driven referral growth and engagement in 2025 and 2026.

Sources

- https://support.google.com/webmasters/answer/17011259?hl=en

- https://support.google.com/webmasters/answer/7576553?hl=en

- https://developers.openai.com/api/docs/guides/embeddings

- https://gs.statcounter.com/search-engine-market-share

- https://support.google.com/webmasters/answer/17010961?hl=en

- https://support.google.com/webmasters/answer/7042828?hl=en

- https://developers.google.com/search/blog/2025/10/search-console-query-groups

- https://developers.google.com/search/blog/2025/11/custom-chart-annotations

- https://support.google.com/webmasters/answer/12917675?hl=en

- https://status.search.google.com/products/rGHU1u87FJnkP6W2GwMi/history

- https://s206.q4cdn.com/479360582/files/doc_financials/2025/q4/2025q4-alphabet-earnings-release.pdf

- https://blog.adobe.com/en/publish/2025/03/17/adobe-analytics-traffic-to-us-retail-websites-from-generative-ai-sources-jumps-1200-percent

- https://blog.google/innovation-and-ai/products/google-io-2025-all-our-announcements/

- https://blog.google/products-and-platforms/products/search/google-search-ai-mode-update/

- https://support.google.com/webmasters/answer/12919192?hl=en

- https://support.google.com/webmasters/answer/17010575?hl=en